When I was first introduced to graph databases I had a hard time trusting them. When a node gets created, where does it go? There are no tables in graph databases, so I was missing that loving embrace, I mean arrangement of rows and columns. It made me a little paranoid, like what if I lost them? I built some projects storing all the data in Postgres first, so if anything happened to the graph I could rebuild it. That warm protective security blanket is something we’re bringing back. We’re taking another walk through a door and arranging all nodes and properties of each type in a set of Vectors (well, one per Shard of course).

Each Shard has a pair of locks as well as NodeTypes and RelationshipTypes:

class Shard : public seastar::peering_sharded_service<Shard> {

private:

seastar::rwlock rel_type_lock;

seastar::rwlock node_type_lock;

NodeTypes node_types;

RelationshipTypes relationship_types;

In the previous post we saw that when a new type of node gets created, we lock the node_types so we won’t dwell on that, instead let’s talk about what is in NodeTypes. I need a map for the string to type_id storage, and the reverse but I can use a vector for the reverse. I need a spot to keep track of the node ids by key, and the reverse. I need a place to keep track of their properties. In the past I had just saved a key/value map for each node to store their properties, but repeating those string keys seemed wasteful. So I changed the storage to a property key dictionary and keeping the properties in a vector with integer keys, but that seemed wasteful as well. In this version we are going for a more columnar style approach, but we’ll talk about the details of Properties later.

I do not need to keep track of the node object itself since I can build it as requested from its parts. I do need a spot for the outgoing and incoming relationships, but we’ll talk about those at another time as well. I definitely need to keep track of deleted ids, but I’m not entirely sure if I need to keep track of the created ids themselves. I think I can use the counts of each type minus the deleted ids to get the valid ids, so we won’t store those either. This is what we’re left with:

class NodeTypes {

private:

std::unordered_map<std::string, uint16_t> type_to_id;

std::vector<std::string> id_to_type;

std::vector<tsl::sparse_map<std::string, uint64_t>> key_to_node_id;

std::vector<std::vector<std::string>> keys;

std::vector<Properties> node_properties;

std::vector<std::vector<std::vector<Group>>> outgoing_relationships;

std::vector<std::vector<std::vector<Group>>> incoming_relationships;

std::vector<Roaring64Map> deleted_ids;

The idea is to try to use as little memory as we can while still getting great performance. We’ll see if we achieve that when we get to our first “release”. To see how some of these pieces fit, let’s try creating a new node. We’ll need an HTTP endpoint at /db/{graph}/nodes/{type}/{key} and a new handler for it.

class Nodes {

class PostNodeHandler : public httpd::handler_base {

public:

explicit PostNodeHandler(Nodes& nodes) : parent(nodes) {};

private:

Nodes& parent;

future<std::unique_ptr<reply>> handle(const sstring& path, std::unique_ptr<request> req, std::unique_ptr<reply> rep) override;

};

Let’s setup the route next. Actually let’s do Get as well as Post. I’ll skip the code but they are basically identical:

void Nodes::set_routes(routes &routes) {

auto postNode = new match_rule(&postNodeHandler);

postNode->add_str("/db/" + graph.GetName() + "/node");

postNode->add_param("type");

postNode->add_param("key");

routes.add(postNode, operation_type::POST);

Now we can create the handle method. First thing we’re going to do is validate the type and key are both there:

future<std::unique_ptr<reply>> Nodes::PostNodeHandler::handle([[maybe_unused]] const sstring &path, std::unique_ptr<request> req, std::unique_ptr<reply> rep) {

bool valid_type = Utilities::validate_parameter(Utilities::TYPE, req, rep, "Invalid type");

bool valid_key = Utilities::validate_parameter(Utilities::KEY, req, rep, "Invalid key");

if(valid_type && valid_key) {

If the body of our POST request is empty, then we’ll create an Empty node. The NodeAddEmptyPeered method returns the newly created id. If it’s greater than 0 then everything went fine, so we build a temporary Node object and convert it to json. If things didn’t go according to plan, we return a bad request.

if (req->content.empty()) {

return parent.graph.shard.local().NodeAddEmptyPeered(req->param[Utilities::TYPE], req->param[Utilities::KEY])

.then([rep = std::move(rep), type = req->param[Utilities::TYPE], key = req->param[Utilities::KEY]](uint64_t id) mutable {

if (id > 0) {

Node node(id, type, key);

rep->write_body("json", json::stream_object((node_json(node))));

rep->set_status(reply::status_type::created);

} else {

rep->write_body("json", json::stream_object("Invalid Request"));

rep->set_status(reply::status_type::bad_request);

}

return make_ready_future<std::unique_ptr<reply>>(std::move(rep));

});

}

We dove in to NodeAddEmptyPeered on the previous post but never got into NodeAddEmpty, so let’s do that now. We start off with an internal node id that is the size of our current array of that type and 0 for our external id indicating we are in an error condition.

uint64_t Shard::NodeAddEmpty(uint16_t type_id, const std::string &key) {

uint64_t internal_id = node_types.getCount(type_id);

uint64_t external_id = 0;

Next we check to see if that node type-key combination already exists in our Graph since it is supposed to be unique.

auto key_search = node_types.getKeysToNodeId(type_id).find(key);

if (key_search == std::end(node_types.getKeysToNodeId(type_id))) {

If it doesn’t exist, then we need to check if we have any deleted nodes of that type. We are going to be recycle internal node ids as they get deleted. We won’t compact them, at least not now, maybe in the future. If we do have deleted nodes we’ll grab the one with the lowest id for our internal id and calculate our new external id which we’ll return at the end. We’ll replace the key of the deleted node

if (node_types.hasDeleted(type_id)) {

internal_id = node_types.getDeletedIdsMinimum(type_id);

external_id = internalToExternal(type_id, internal_id);

// Replace the deleted node and remove it from the list

node_types.getKeys(type_id).at(internal_id) = key;

node_types.addId(type_id, internal_id);

} else {

external_id = internalToExternal(type_id, internal_id);

// Add the node to the end and prepare a place for its relationships

node_types.getKeys(type_id).emplace_back(key);

node_types.getOutgoingRelationships(type_id).emplace_back();

node_types.getIncomingRelationships(type_id).emplace_back();

node_types.addId(type_id, internal_id);

}

The last thing we do is add the key and external id to the map, and return the newly created or recycled external id.

node_types.getKeysToNodeId(type_id).insert({key, external_id });

}

return external_id;

Back in our main, we’ll add the Nodes handler (remember we wrote an endpoint for POST and one for GET) to our server:

Nodes nodes(graph);

server->set_routes([&nodes](routes& r) { nodes.set_routes(r); }).get();

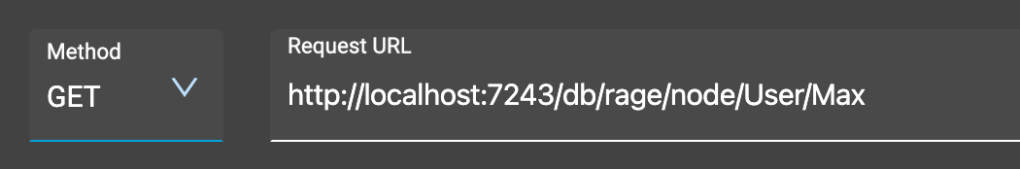

Let’s start this puppy and see where we’re at by sending a post request via Advanced Rest Client:

and what do we get:

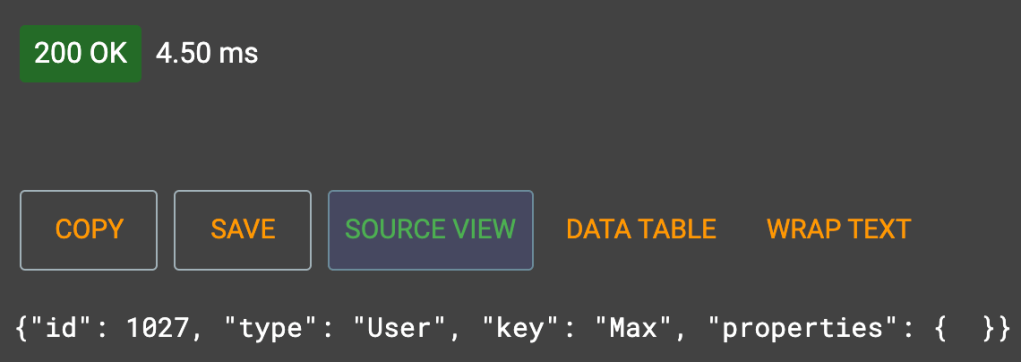

Exactly what we expected. The node was created, we get a 201 status code and node as a JSON object. Now let’s try to get it back from the server using GET:

…and what do we get back? Exactly the same thing, except now its a status 200 of course.

At this point we’ve built a Key Value store! Well…an overly complicated one, but we can write values and get them back if we know the exact key and value we wrote… so it’s a terrible KV store. We need to add properties. We’ll tackle that next so stay tuned for the next installment.