About eight years ago, I wrote a little blog post on dynamic decision trees. If you have the time, go back and read that and the follow-up posts on the subject. If not, I’ll summarize. Instead of building a Rules Engine to make decisions, I built a dynamic traverser that evaluates logic on the fly. This lets us change how the decisions are made at any time. We can also version the trees as we change them and create completely new trees for any complex decision making. If we don’t have enough information to reach a conclusion, it doesn’t give up or make something up. Instead, it asks us a question we must answer to continue down the path.

I bring up this relic from the past because I’m still trying to wrap my head around the value of “Context Graphs”, which are supposed to be graphs that capture how, when and why decisions were made instead of what decision was made. The idea is to capture “decision traces”, which then allow an AI agent to see precedents (how similar problems were solved) and apply those same rules to new situations. The first decision traces would be made by people, and then the agents could learn and take it from there. So every time you want a decision answered, your agents would read all the previous decisions, analyze them, and make a new decision. But why? If we made a policy change, would the AI agents now ignore all the previous decisions and use the new policy? Would we have to repopulate the graph with human-made decisions following the new policy so the agent understood there was a change?

If that’s the case, then why do we need to keep track of how every decision was made? Can we make our life simpler by not storing the how, bypassing the when with versioning, and just sticking to storing the why? Would we need agents at all or could we do it all from just a query to the database? Think about this for a minute while I tell you about the National Comprehensive Cancer Network (NCCN).

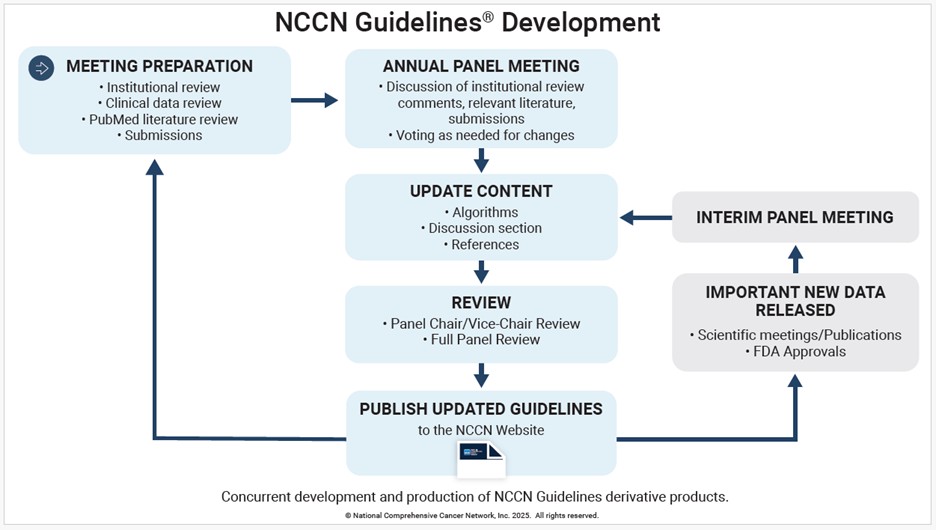

In a nutshell, the NCCN is a non-profit made up of the best cancer centers in the nation. It helps millions of people facing cancer by creating guidelines for giving patients the best evidence-based care available. Every year, panels of specialists from these centers meet to review the new findings in their specific field and publish guides for how doctors should treat their patients: what procedures to use, what test results to gather, what drugs to prescribe, what surgeries to perform, etc.

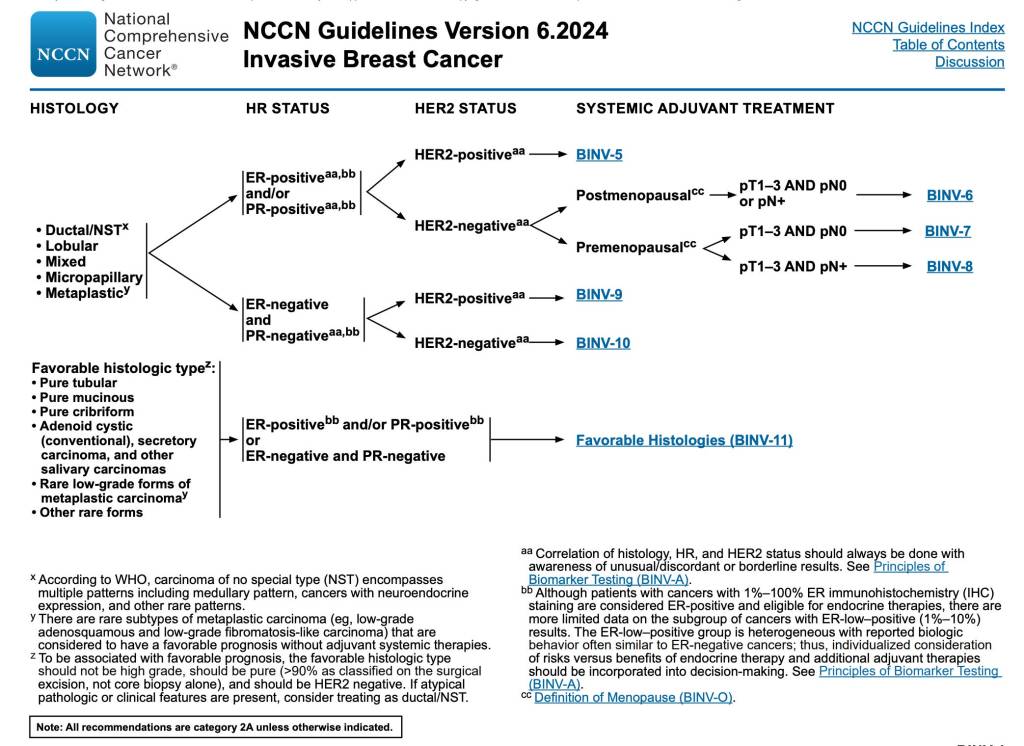

The published guidelines look like this:

They are decision trees. Instead of every doctor treating cancer having to read all the latest studies and findings in their field and adjust their treatment plans accordingly, they can just look at these guidelines and follow the decision trees. The NCCN isn’t the only one; other entities publish guidelines as well (see here, and here). That saves a lot of time and makes sense for doctors. So why are we going to make agents relearn decision traces over and over again for every decision? That seems like a great way to set money on fire by eating up your AI tokens for no good reason.

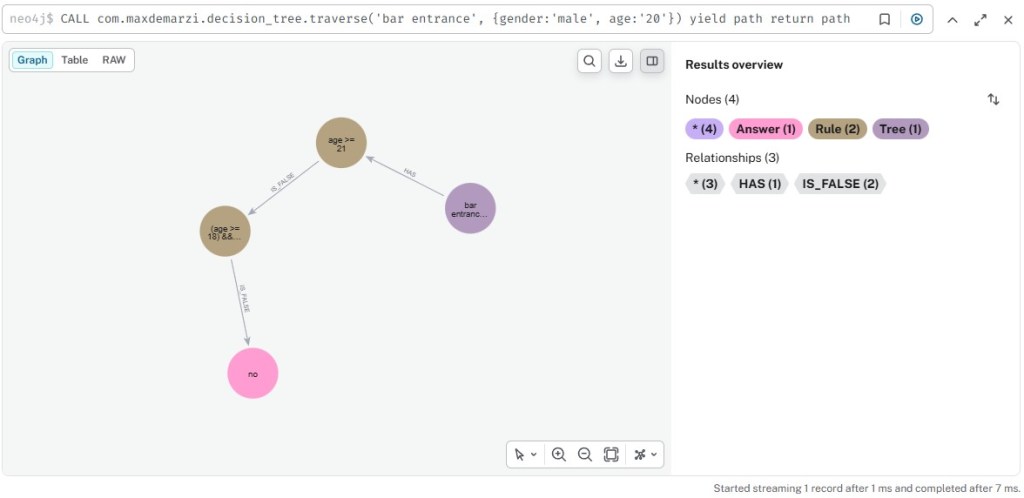

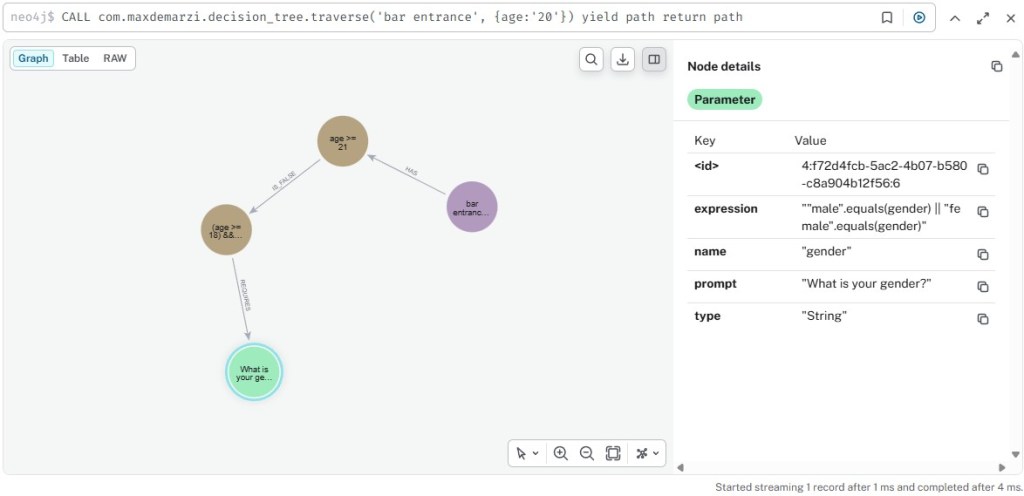

So let’s build decision graphs instead of context graphs. Just like before, we create a simple decision tree for getting into a bar. They are having “ladies night” tonight, so women 18 and up can enter, but men have to be 21 to be allowed inside. So we create two rules. The first is simple: if age >= 21, then yes. The second is: if age >= 18 AND gender = ‘female’, then yes. If the first rule results in a no, then the second rule is applied. A man walks up to the bouncer. The bouncer looks at his ID and sees he is 20 years old. According to the policy being followed tonight, this person cannot enter the bar.

Pay special attention to the bottom right of that image: “Completed in 7 ms.” That’s seven milliseconds. Total AI token cost? Zero. Now a young lady walks up to the bouncer.

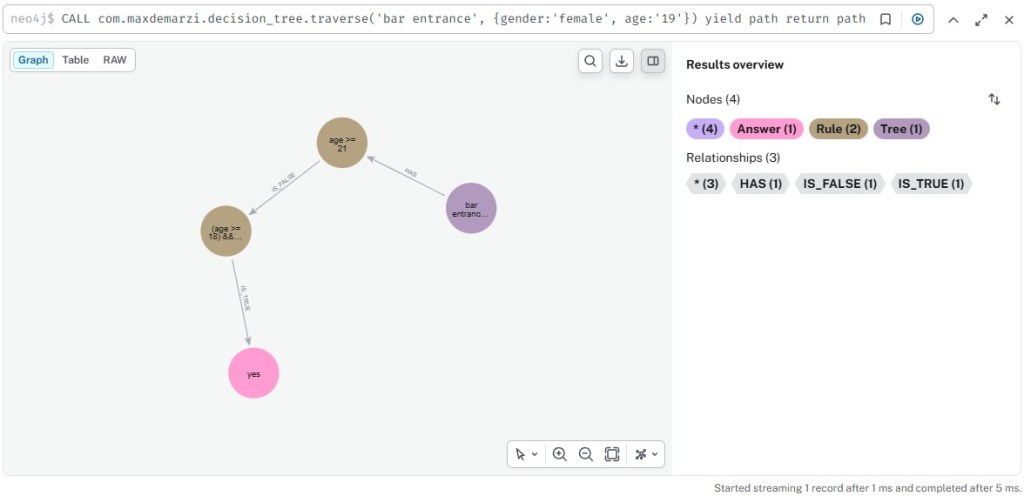

She gets a “yes” and is allowed inside. Next, a 20-year-old walks up to the bouncer. But a 20-year-old what? The bouncer isn’t sure, so they ask, “What is your gender?” Once they get an answer, they rerun the query with the additional parameter and either get a decision or another question to ask.

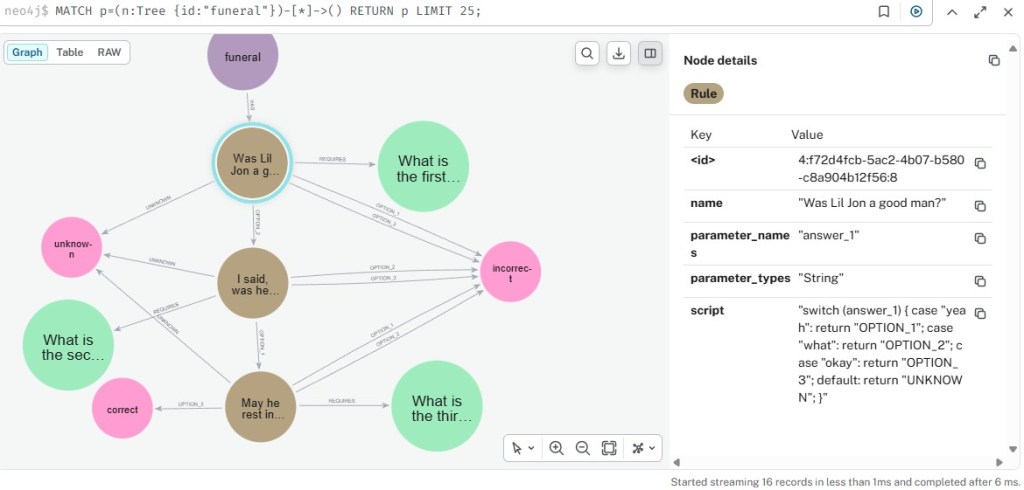
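To make the "decide or ask" behavior concrete, here is a minimal plain-Python sketch of the bouncer's two rules. It is not the Neo4j or PyRel implementation; the `RULES` format, the `QUESTIONS` table, and the `decide` function are hypothetical names made up for illustration.

```python
from typing import Callable

# Hypothetical rule format: (required parameter names, predicate).
# Rules are tried in order; the first one that evaluates to True admits the guest.
RULES: list[tuple[list[str], Callable[..., bool]]] = [
    (["age"], lambda age: age >= 21),
    (["age", "gender"], lambda age, gender: age >= 18 and gender == "female"),
]

# Hypothetical question text to ask when a parameter is missing.
QUESTIONS = {"age": "How old are you?", "gender": "What is your gender?"}


def decide(facts: dict) -> dict:
    """Return a decision, or the next question when a needed fact is missing."""
    for required, predicate in RULES:
        missing = [name for name in required if name not in facts]
        if missing:
            # Not enough information: ask instead of guessing.
            return {"question": QUESTIONS[missing[0]]}
        if predicate(*(facts[name] for name in required)):
            return {"answer": "yes"}
    return {"answer": "no"}


print(decide({"age": 20, "gender": "male"}))    # {'answer': 'no'}
print(decide({"age": 19, "gender": "female"}))  # {'answer': 'yes'}
print(decide({"age": 20}))                      # {'question': 'What is your gender?'}
```

Note that a 22-year-old never gets asked about gender, because the first rule already says yes. The question only comes up when the path actually depends on the missing value.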

It works for multiple-choice answers as well, not just yes-or-no questions. This one follows the terrible Lil Jon example I used in part 2 of the previous series, but it doesn’t matter. The decision trees can be as big and as complicated as you want; they will still be tiny graphs that take no time at all to traverse.

If the decision tree changes because of a business, regulatory, or whatever reason… then no problem. Rename the tree by adding a date to the end of it, and create a new tree with the new rules, or if you don’t care about keeping the when, then just make your changes to the tree as-is. If we can model something as complicated as how to treat cancer using decision trees, we can model credit line increases, underwriting guidelines, insurance approvals, and just about anything else you can think of.
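As a rough illustration of the rename-and-replace approach, here is a small Python sketch that uses a dictionary in place of the graph. The store layout, the `publish_new_version` helper, the tree name, and the date are all hypothetical.

```python
from datetime import date

# Hypothetical in-memory store: tree name -> list of rule descriptions.
trees = {"bar entrance": ["age >= 21", "age >= 18 and gender == 'female'"]}


def publish_new_version(store: dict, name: str, new_rules: list, retired_on: date) -> str:
    """Archive the current tree under a dated name, then install the new rules."""
    archived_name = f"{name} {retired_on.isoformat()}"
    store[archived_name] = store[name]  # keep the old policy around (the "when")
    store[name] = new_rules             # callers keep using the same tree name
    return archived_name


archived = publish_new_version(trees, "bar entrance", ["age >= 21"], date(2025, 1, 31))
print(archived)               # bar entrance 2025-01-31
print(trees["bar entrance"])  # ['age >= 21']
```

Anything that asks for "bar entrance" picks up the new policy immediately, while the dated copy stays available if someone needs to know what the rules were at the time.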

If you’ve read the previous blog posts, you already know how the trick is done. I am using the “Neo4j Traversal API”. It allows us to specify, in Java, reasons to traverse one way or another along a path. In our case, we use the Janino embedded Java compiler to evaluate Java code snippets saved as node properties in our Rule and Parameter nodes. We are no longer just “pattern matching” like Cypher, but using any custom logic we want to “reason” about which direction we want to go. This is a power that Cypher does not have. This is a power that GQL does not have. You can do this in Gremlin only if the host language allows lambdas, but you can’t do it in AWS Neptune since lambdas are not allowed. You can do this in RageDB since we’re just using Lua and it can do anything… and you can also do this in Relational.AI with our PyRel query language.
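The idea of keeping logic as a property on a node and evaluating it during traversal can be sketched in a few lines of Python. This is only an analogy for the Java and Janino setup: the `nodes` dictionary, its property names, and the `traverse` function are hypothetical, and Python's `eval` stands in for the embedded compiler.

```python
# Toy graph: each rule node keeps its logic as a source string in a property,
# loosely mirroring code snippets stored on Rule nodes and compiled on the fly.
nodes = {
    "tree": {"kind": "tree", "next": "over_21"},
    "over_21": {"kind": "rule", "expression": "age >= 21",
                "true": "yes", "false": "ladies_night"},
    "ladies_night": {"kind": "rule", "expression": "age >= 18 and gender == 'female'",
                     "true": "yes", "false": "no"},
    "yes": {"kind": "answer", "label": "yes"},
    "no": {"kind": "answer", "label": "no"},
}


def traverse(start: str, facts: dict) -> str:
    """Walk from the tree node to an answer, evaluating each stored expression."""
    current = nodes[start]
    while current["kind"] != "answer":
        if current["kind"] == "tree":
            current = nodes[current["next"]]
            continue
        # Evaluate the stored snippet with no builtins and only the supplied facts.
        outcome = eval(current["expression"], {"__builtins__": {}}, dict(facts))
        current = nodes[current["true" if outcome else "false"]]
    return current["label"]


print(traverse("tree", {"age": 20, "gender": "male"}))    # no
print(traverse("tree", {"age": 19, "gender": "female"}))  # yes
```

Changing a decision means editing the `expression` string on a node, not redeploying code. One caveat: `eval` is not a real sandbox even with builtins removed, so only evaluate expressions you wrote yourself.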

We start by creating our node types, the equivalent of Labels in Neo4j:

    import relationalai as rai

    model = rai.Model("decision_tree")

    # One Type per kind of node in the decision graph
    InputNode = model.Type("InputNode")
    Path = model.Type("Path")
    Tree = model.Type("Tree")
    Rule = model.Type("Rule")
    Answer = model.Type("Answer")
    Parameter = model.Type("Parameter")

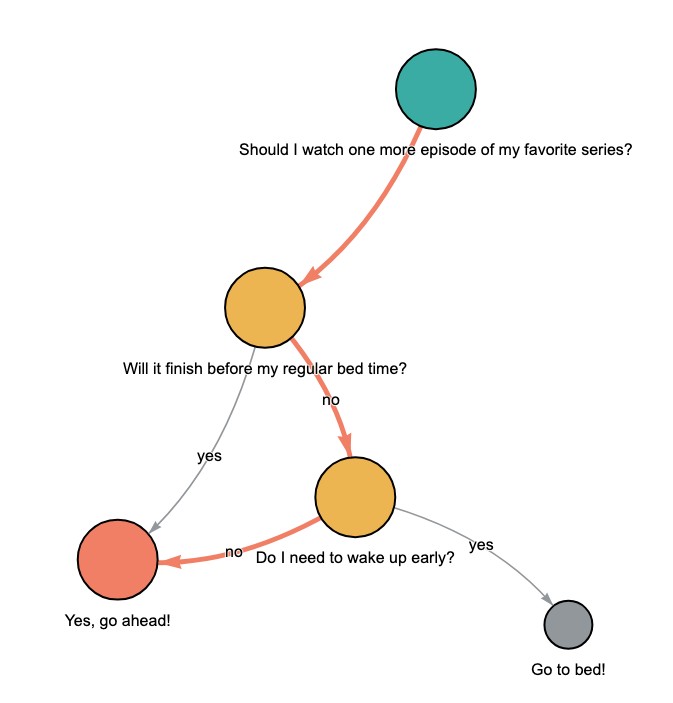

From here let’s define a decision tree that helps us decide if we should stay up late and watch TV or go to bed already:

    trees = {1: "Should I watch one more episode of my favorite series?"}
    rules = {2: "Will it finish before my regular bed time?",
             3: "Do I need to wake up early?"}
    answers = {4: "Yes, go ahead!",
               5: "Go to bed!"}
    rules_expressions = {2: (["episode_length", "minutes_until_bedtime"], lambda p1, p2: p1 < p2),
                         3: (["need_to_wake_up_early"], lambda p: p == "yes")}
    rule_case_labels = {True: 'yes', False: 'no', None: ''}
    # (from_id, to_id): condition
    paths = {(1, 2): None, (2, 3): False, (2, 4): True, (3, 4): False, (3, 5): True}

Notice we are using lambdas in our rule expressions. These will be evaluated at traversal time when we know the values for their corresponding parameters. We feed these objects to a function to actually create our graph:

    def create_input_nodes(dict, SubType):
        for id, label in dict.items():
            with model.rule():
                InputNode.add(id=id).set(label=label).set(SubType)

    create_input_nodes(trees, Tree)
    create_input_nodes(rules, Rule)
    create_input_nodes(answers, Answer)

We can create Rules in our model that will evaluate the rules_expressions we defined above dynamically based on the parameters we pass in:

    for id, (expression_params, expression_func) in rules_expressions.items():
        with model.rule(dynamic=True):
            r = Rule(id=id)
            with expression_func(*[Parameter(name=p).value for p in expression_params]):
                r.set(condition_value = True)

With that all set, we can create rules for an “Active Path” from our possible paths that traverses the paths that are “true”:

    ActivePath = model.Type("ActivePath")

    with model.rule():
        p = Path()
        p.from_.condition_value.or_(False) == p.condition
        p.set(ActivePath)

    with model.rule():
        p = Path()
        with model.not_found():
            p.condition
        p.set(ActivePath)
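If the two ActivePath rules feel abstract, here is my reading of them in plain Python, using the same dictionaries and the sample parameter values from this post. It is not PyRel and is only a sketch of the logic: a path is active if it has no condition, or if its condition equals the source rule's `condition_value`, which defaults to False when the rule's expression did not hold.

```python
rules_expressions = {
    2: (["episode_length", "minutes_until_bedtime"], lambda p1, p2: p1 < p2),
    3: (["need_to_wake_up_early"], lambda p: p == "yes"),
}
# (from_id, to_id): condition
paths = {(1, 2): None, (2, 3): False, (2, 4): True, (3, 4): False, (3, 5): True}
parameters = {"episode_length": 45,
              "minutes_until_bedtime": 40,
              "need_to_wake_up_early": "no"}

# condition_value is only recorded when a rule's expression holds.
condition_value = {
    rule_id: True
    for rule_id, (names, func) in rules_expressions.items()
    if func(*(parameters[n] for n in names))
}

# Unconditional paths are always active; conditional ones must match the
# source rule's value, falling back to False when none was recorded.
active_paths = {
    (src, dst)
    for (src, dst), condition in paths.items()
    if condition is None or condition_value.get(src, False) == condition
}

print(sorted(active_paths))  # [(1, 2), (2, 3), (3, 4)]
```

With a 45 minute episode and 40 minutes until bedtime, neither rule expression holds, so `condition_value` stays empty and every "False" branch becomes active.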

So when we dynamically pass in our tree and parameter values:

    tree = "Should I watch one more episode of my favorite series?"
    parameters = {"episode_length": 45,
                  "minutes_until_bedtime": 40,
                  "need_to_wake_up_early": "no"}

…and use those parameters in a query:

    with model.rule(dynamic=True):
        for name, value in parameters.items():
            Parameter.add(name=name).set(value=value)

We can get a quick answer and a pretty representation of our tree and what path we took.
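For readers without a RelationalAI account, the same walk can be reproduced in plain Python to check which answer these parameters lead to. The `walk` function below is a hypothetical stand-in written for this post, not part of PyRel. It evaluates each rule's lambda and follows the outgoing path whose condition matches.

```python
trees = {1: "Should I watch one more episode of my favorite series?"}
rules = {2: "Will it finish before my regular bed time?",
         3: "Do I need to wake up early?"}
answers = {4: "Yes, go ahead!",
           5: "Go to bed!"}
rules_expressions = {
    2: (["episode_length", "minutes_until_bedtime"], lambda p1, p2: p1 < p2),
    3: (["need_to_wake_up_early"], lambda p: p == "yes"),
}
rule_case_labels = {True: "yes", False: "no", None: ""}
# (from_id, to_id): condition
paths = {(1, 2): None, (2, 3): False, (2, 4): True, (3, 4): False, (3, 5): True}
parameters = {"episode_length": 45,
              "minutes_until_bedtime": 40,
              "need_to_wake_up_early": "no"}


def walk(tree_id, parameters):
    """Follow one outgoing path per node until an answer node is reached."""
    labels = {**trees, **rules, **answers}
    current, trace = tree_id, []
    while current not in answers:
        outcome = None  # tree nodes have no expression, so they match condition None
        if current in rules_expressions:
            names, func = rules_expressions[current]
            outcome = func(*(parameters[n] for n in names))
        # Pick the outgoing path whose condition matches the rule outcome.
        nxt = next(dst for (src, dst), cond in paths.items()
                   if src == current and cond == outcome)
        trace.append((labels[current], rule_case_labels[outcome]))
        current = nxt
    return trace, answers[current]


trace, answer = walk(1, parameters)
for question, choice in trace:
    print(question, choice)
print(answer)  # Yes, go ahead!
```

The episode will not finish before bedtime, but since there is no need to wake up early, the tree still says to go ahead.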

Now imagine you are able to collect the decision-making capabilities of particular subject matter experts, like the NCCN did for the doctors who specialize in cancer. Imagine you can do it for a bunch of different roles specific to your business. What if it could also prescribe what you should be doing instead of what you are doing now? What if it could predict what you will be doing next? What could you do with that kind of decisioning power? Do you want to find out?